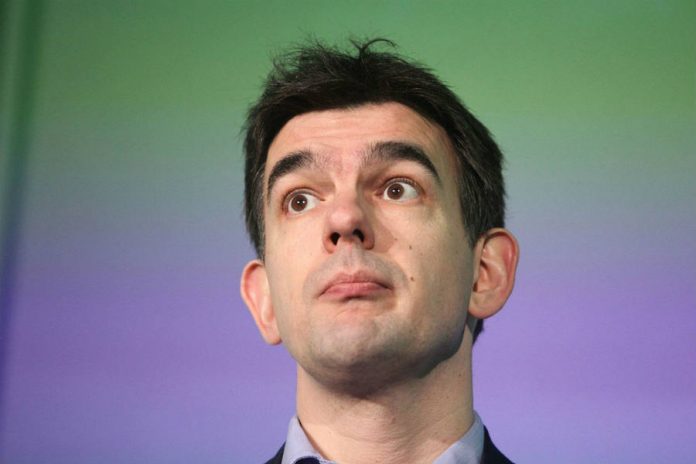

Google’s President of EMEA Business and Operations, Matt Brittin, publicly apologized for the ad scandal on YouTube this morning at the Advertising Week Europe event in London.

The video platform inadvertently featured ads by several British companies and organizations on videos posted by extremist groups and terrorists like the KKK and ISIS. Companies like The Guardian, the BBC, and others have since pulled their ads from YouTube.

Google took a hit on the stock market as distrust among advertisers continued to grow, and the company is now seeking redemption in the public eye by promising to strengthen its content policies to avoid future incidents.

Brands are worried Google is not doing enough to protect their image

The controversy started back in February, when the British newspaper The Times published an investigation revealing that several companies and institutions had ads on YouTube running alongside videos featuring extremist content.

ISIS propaganda clips and footage featuring former Ku Klux Klan leader David Duke carried advertising from Transport for London, the Financial Conduct Authority, The Guardian, the BBC, Honda, and L’Oreal.

Last week, a group of U.K. firms and entities collectively pulled their ads from YouTube, saying the platform had misrepresented them by pairing their ads with these kinds of channels.

The British government has also taken action by suspending its online campaigns on the popular video site, and more companies joined the movement over the weekend, distancing themselves from the Alphabet subsidiary.

“We accept we don’t always get it right, and that sometimes, ads appear where they should not. We’re committed to doing better, and will make changes to our policies and brand controls for advertisers,” a Google spokesman said.

Google and other tech giants have to step up against extremist content

Google has increased its efforts year after year to monitor online content that affects its search engine results, fighting bad ads and malware-infected banners that pop up from time to time.

Initiatives on YouTube include the community-driven YouTube Heroes, a program that lets users flag offensive content and move up within the site’s structure as trusted video reviewers.

After apologizing at the annual advertising event in London this morning, Google Europe exec Matt Brittin promised that an announcement with concrete actions would follow in the next couple of days.

Facebook, Microsoft, Twitter, and Google have pledged to keep inappropriate content from surfacing online by flagging it automatically, much as they do with other offensive material such as child pornography.

This approach has complications, as not all images or videos that seem to promote extremist movements are actually doing so. Some of this footage may be used in journalistic pieces or informative campaigns that have nothing to do with giving these groups a voice.

Source: Reuters