Yesterday, a post on Google’s blog announced a new photo-scanning app called PhotoScan. It works as a standalone app but integrates with Google Photos for storage, and it is already available for Android and iOS.

PhotoScan attempts not only to digitize a user’s printed photos in high resolution but also to create a whole new image from each one. The app automatically adjusts features like edges, rotation, and color so the result looks as if it came from a smartphone camera.

Along with PhotoScan, Google unveiled an update for the Photos app that adds a broad range of new filters and photo editing tools. The latest version of Photos is also available to download from the Play Store and App Store.

How does PhotoScan work?

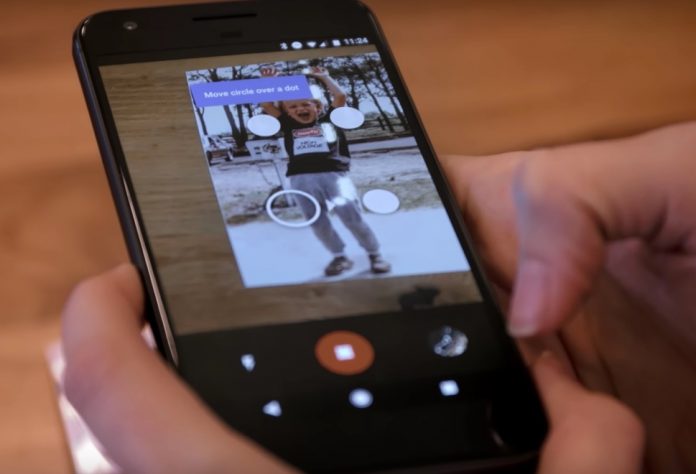

The PhotoScan interface looks like a regular smartphone camera; the only difference is that users point it at printed photos. Once the phone recognizes the picture, four white dots appear over it.

The user must then move the phone over each of the four dots in turn, which lets the app work out the frame and dimensions of the photograph and produce a digital scan. These dots also enable the phone to detect and correct glare spots, which form when users photograph a reflective surface such as the glossy paper of a printed photo.

A little more insight into the inner workings of PhotoScan

The app relies on “computational photography,” a term that covers much of what users already do with smartphone cameras today. Adjusting a picture’s exposure before taking it, shooting panoramas, and applying filters are all examples of computational photography.

To scan a picture without glare, the app works as follows. The four dots in the interface prompt the user to move the phone so the app can capture the picture from several positions. Glare usually appears when the phone sits directly above the picture, and it shifts as the phone moves, so each shot leaves different areas of the picture free of glare.

After taking the four shots (one for each white dot), PhotoScan automatically combines them into one picture, which is the final product, glare-free. This process also helps maintain a good resolution.
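
As a rough illustration of how several shots can be merged into one glare-free picture, here is a minimal Python sketch. It is not Google’s implementation: it assumes the shots are already perfectly aligned, treats each one as a small grayscale grid, and simply keeps the darkest value seen at each pixel, on the assumption that glare only ever makes a pixel brighter. The function name `combine_glare_free` and the sample data are hypothetical.

```python
from typing import List

# A grayscale image as rows of brightness values from 0 (black) to 255 (white).
Image = List[List[int]]


def combine_glare_free(frames: List[Image]) -> Image:
    """Merge pre-aligned frames by keeping the darkest value at each pixel.

    Assumption: glare only brightens a pixel, so the minimum across frames
    is the best guess at the glare-free value.
    """
    if not frames:
        raise ValueError("at least one frame is required")
    height, width = len(frames[0]), len(frames[0][0])
    for frame in frames:
        if len(frame) != height or any(len(row) != width for row in frame):
            raise ValueError("all frames must have the same dimensions")
    return [
        [min(frame[y][x] for frame in frames) for x in range(width)]
        for y in range(height)
    ]


# Four hypothetical 2x2 shots of the same photo; 255 marks a glare spot
# that lands on a different pixel in each shot.
shots = [
    [[255, 40], [90, 120]],
    [[10, 255], [90, 120]],
    [[10, 40], [255, 120]],
    [[10, 40], [90, 255]],
]
result = combine_glare_free(shots)
print(result)
```

In this toy example every pixel is free of glare in three of the four shots, so the merged result contains no glare at all. A real app would first have to align the shots with one another, since the phone moves between captures.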

PhotoScan does more than scan the image: it reworks and recreates it digitally, analyzing its parts and pixels and making decisions on color and other factors through computational photography and machine learning.

Source: Google